And because Airflow can connect to a variety of data sources – APIs, databases, data warehouses, and so on – it provides greater architectural flexibility. Prior to the emergence of Airflow, common workflow or job schedulers managed Hadoop jobs and generally required multiple configuration files and file system trees to create DAGs (examples include Azkaban and Apache Oozie ).īut in Airflow it could take just one Python file to create a DAG. There are also certain technical considerations even for ideal use cases. Backups and other DevOps tasks, such as submitting a Spark job and storing the resulting data on a Hadoop clusterĪs with most applications, Airflow is not a panacea, and is not appropriate for every use case.Machine learning model training, such as triggering a SageMaker job.Building ETL pipelines that extract batch data from multiple sources, and run Spark jobs or other data transformations.Handling data pipelines that change slowly (days or weeks – not hours or minutes), are related to a specific time interval, or are pre-scheduled.Automatically organizing, executing, and monitoring data flow.And you have several options for deployment, including self-service/open source or as a managed service. Airflow’s visual DAGs also provide data lineage, which facilitates debugging of data flows and aids in auditing and data governance. The Airflow UI enables you to visualize pipelines running in production monitor progress and troubleshoot issues when needed. You add tasks or dependencies programmatically, with simple parallelization that’s enabled automatically by the executor. A scheduler executes tasks on a set of workers according to any dependencies you specify – for example, to wait for a Spark job to complete and then forward the output to a target. 
create and manage scripted data pipelines as code (Python)Īirflow organizes your workflows into DAGs composed of tasks.run workflows that are not data-related.orchestrate data pipelines over object stores and data warehouses.Astronomer.io and Google also offer managed Airflow services.

(And Airbnb, of course.) Amazon offers AWS Managed Workflows on Apache Airflow (MWAA) as a commercial managed service. According to marketing intelligence firm HG Insights, as of the end of 2021 Airflow was used by almost 10,000 organizations, including Applied Materials, the Walt Disney Company, and Zoom. Airflow’s proponents consider it to be distributed, scalable, flexible, and well-suited to handle the orchestration of complex business logic. Written in Python, Airflow is increasingly popular, especially among developers, due to its focus on configuration as code. Airbnb open-sourced Airflow early on, and it became a Top-Level Apache Software Foundation project in early 2019. Apache Airflow – an open-source workflow management systemĪirflow was developed by Airbnb to author, schedule, and monitor the company’s complex workflows.ETL pipeline – the process of moving and transforming data.Apache Kafka – an open-source streaming platform and message queue.You manage task scheduling as code, and can visualize your data pipelines’ dependencies, progress, logs, code, trigger tasks, and success status. There’s no concept of data input or output – just flow. Airflow enables you to manage your data pipelines by authoring workflows as Directed Acyclic Graphs (DAGs) of tasks. The full technical report is available for download at this link.Īpache Airflow is a powerful and widely-used open-source workflow management system (WMS) designed to programmatically author, schedule, orchestrate, and monitor data pipelines and workflows.

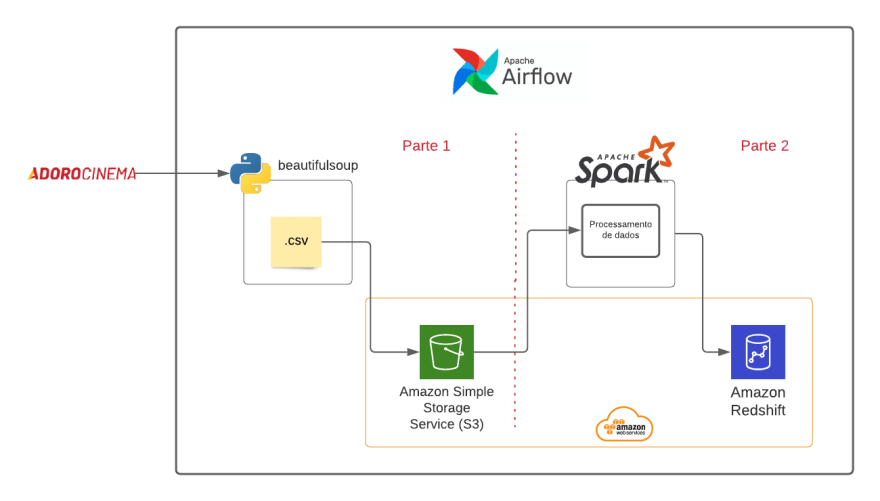

This article below is part of our detailed technical report titled “ Orchestrating Data Pipeline Workflows With and Without Apache Airflow.” In it, we conduct a comprehensive analysis of Airflow’s capabilities compared to alternative solutions. Eliminating Complex Orchestration with Upsolver SQLake’s Declarative Pipelines.Reasons Managing Workflows with Airflow can be Painful.While Spark is certainly scalable, Airflow’s flexibility stands out, making it ideal for managing intricate workflows across diverse data sources and platforms. Spark, on the other hand, excels in distributed data processing and analysis.

Both Airflow and Spark are part of the vibrant open source ecosystem, providing access to their robust features without the shackles of licensing fees.īoth tools cater to the demands of data engineering and machine learning workflows, making them versatile choices for data-driven projects.īoth Airflow and Spark enable the creation, scheduling, and management of workflows, albeit with differing approaches.Īirflow primarily focuses on orchestrating workflows and managing task dependencies.
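To make the idea of orchestrating tasks by their dependencies more concrete, here is a minimal sketch in plain Python. It is not Airflow's API and not how Airflow's scheduler is implemented; it only illustrates the general technique of ordering a DAG topologically and running independent tasks in parallel. The task names, the `run_task` stand-in, and the `run_pipeline` helper are all hypothetical, and the standard-library `graphlib` module (Python 3.9+) is assumed.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it must wait for.
dependencies = {
    "extract_api": set(),
    "extract_db": set(),
    "spark_transform": {"extract_api", "extract_db"},
    "load_warehouse": {"spark_transform"},
}


def run_task(name):
    # Stand-in for real work (an API call, a Spark job submission, and so on).
    return name


def run_pipeline(deps, max_workers=2):
    """Run tasks in dependency order, one 'wave' of ready tasks at a time."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    waves = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while sorter.is_active():
            # Tasks whose upstream dependencies have all completed.
            ready = sorted(sorter.get_ready())
            # Tasks in the same wave are independent, so they can run in parallel.
            finished = list(pool.map(run_task, ready))
            sorter.done(*finished)
            waves.append(finished)
    return waves


waves = run_pipeline(dependencies)
print(waves)
```

In this sketch the two extract tasks run together, the transform waits for both, and the load runs last. Waiting for a whole wave to finish before starting the next is a simplification chosen for readability; a production scheduler would typically dispatch each task as soon as its own dependencies complete.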
